What is caching?

Last updated 2022-05-02

A cache is a location that temporarily stores data so that the clients and applications that need it can retrieve it faster. Caching refers to the process of storing this data. You may have heard the term cache used in non-technical contexts. For example, campers and hikers may cache a food supply along a trail. Just think of a cache as a place to store something for convenience.

In a perfect world, a user visiting your website would have local access to all data assets needed to render the website. However, those assets are located elsewhere, like in a data center or cloud service, and there is a cost to retrieving those assets. The user pays in latency, the time required to generate and load the assets. You as the site owner pay a monetary cost not only to host your content but to move that content out of storage when requested, incurring what’s known as egress costs. And the more traffic your website receives, the more you have to pay to respond to those requests, many of which are for the same content.

Caching lets you store copies of your content to speed up delivery of that content while expending fewer resources.

How caching works

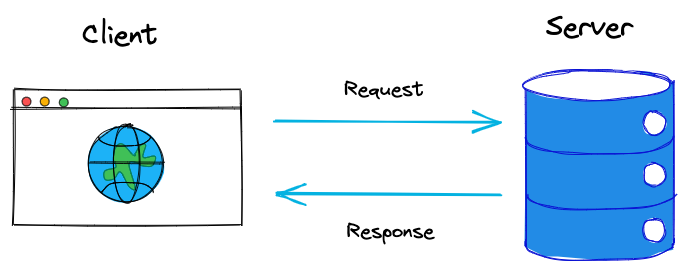

To understand how caching works, we need to understand how HTTP requests and responses work. When a user enters the URL to your website in their browser, a request is sent from their browser (the client) to the location where the content is located (your origin server). Your origin server processes the request and sends a response to the client.

While it may seem like this happens instantaneously, in reality it takes time for the origin server to process the request, generate the response, and send the response to the client. Additionally, many different requests are required to render your website, including requests for all the different data that make up your site (images, HTML web pages, and CSS files are just a few examples).

This is where a local cache comes into play. A user’s browser may cache some static assets, like the header image with your logo and the site's stylesheet, on the user’s personal computer to help improve performance the next time they access the site. However, this type of cache is only helpful to one user.

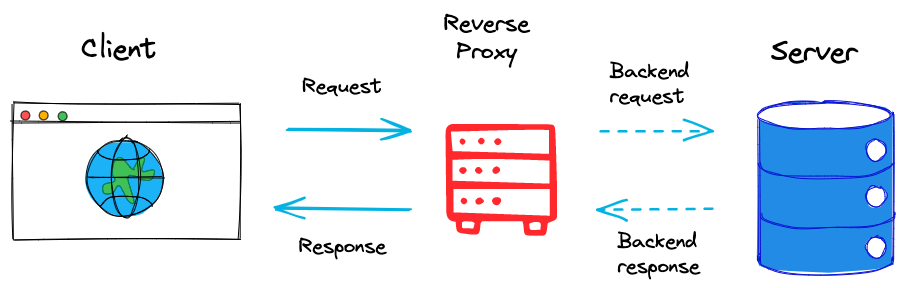

To cache assets for all users visiting your site, you might consider a remote cache like a reverse proxy. A reverse proxy is an application that sits between the client and your origin servers and receives and responds to requests on your behalf. You can install a reverse proxy on the same server that hosts your website or on a different server. The reverse proxy caches content from your origins so that subsequent requests can be served from cache.
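The idea of serving subsequent requests from cache can be sketched in a few lines of Python. This is a toy model, not how any particular reverse proxy is implemented: the `CachingProxy` class, the `origin` function, and the 60-second lifetime are all hypothetical names and values chosen for illustration.

```python
import time
from typing import Callable, Dict, List, Tuple


class CachingProxy:
    """Toy reverse proxy: answers from an in-memory cache, else asks the origin."""

    def __init__(self, fetch_from_origin: Callable[[str], str], ttl_seconds: float) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        # Maps a request path to (expiry time, cached response body).
        self._store: Dict[str, Tuple[float, str]] = {}

    def get(self, path: str) -> Tuple[str, str]:
        """Return (body, "HIT" or "MISS") for the requested path."""
        now = time.monotonic()
        entry = self._store.get(path)
        if entry is not None and entry[0] > now:
            return entry[1], "HIT"  # still fresh: the origin is not contacted
        body = self._fetch(path)  # missing or expired: go to the origin
        self._store[path] = (now + self._ttl, body)
        return body, "MISS"


origin_calls: List[str] = []


def origin(path: str) -> str:
    """Stand-in for an origin server; records every request it receives."""
    origin_calls.append(path)
    return f"<html>content for {path}</html>"


proxy = CachingProxy(origin, ttl_seconds=60)
first = proxy.get("/index.html")   # first request has to reach the origin
second = proxy.get("/index.html")  # repeat request is answered from cache
print(first[1], second[1], len(origin_calls))
```

The second request returns the same body as the first, but the origin only sees one request. A real reverse proxy also has to decide what is safe to cache and for how long, which is where HTTP headers come in.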

HTTP headers are used to pass information between the client and origin server on the request and the response. You can set certain HTTP headers on the response to control which content is cached and for how long. When using a reverse proxy, the reverse proxy acts as an intermediary, stripping certain headers sent by the origin server and adding other headers to send to the client, all for the purpose of controlling how objects are cached. For more information about controlling how long to cache your resources, start with our documentation on cache freshness.
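As a rough illustration of how a response header can control cache lifetime, the sketch below reads a `Cache-Control` header value and works out how long a shared cache (like a reverse proxy) might reuse the response. The function names are hypothetical, and the logic is deliberately simplified: it treats `no-store`, `no-cache`, and `private` as "do not reuse", prefers `s-maxage` over `max-age`, and ignores other headers and heuristics that real caches take into account.

```python
from typing import Dict, Optional


def parse_cache_control(header_value: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control value into a {directive: value-or-None} mapping."""
    directives: Dict[str, Optional[str]] = {}
    for part in header_value.split(","):
        part = part.strip()
        if not part:
            continue
        name, sep, value = part.partition("=")
        directives[name.strip().lower()] = value.strip().strip('"') if sep else None
    return directives


def shared_cache_lifetime(header_value: str) -> int:
    """Seconds a shared cache may reuse the response (0 means do not reuse)."""
    directives = parse_cache_control(header_value)
    # Simplification: any of these directives means "do not serve from a shared cache".
    if "no-store" in directives or "no-cache" in directives or "private" in directives:
        return 0
    # s-maxage applies to shared caches and takes priority over max-age.
    for name in ("s-maxage", "max-age"):
        value = directives.get(name)
        if value is not None and value.isdigit():
            return int(value)
    return 0


print(shared_cache_lifetime("public, max-age=3600"))
print(shared_cache_lifetime("max-age=60, s-maxage=300"))
print(shared_cache_lifetime("private, max-age=600"))
```

With these inputs, the first response could be reused for an hour, the second for five minutes by a shared cache (even though browsers would only keep it for one minute), and the third not at all by a shared cache.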

Benefits of caching with a reverse proxy

Remote caching via a reverse proxy lets you store a copy of assets so that subsequent requests from any user can be delivered immediately without having to wait for them to be generated. Caching an already-generated asset means that a request for that asset can be responded to immediately without your origin server needing to do any extra work. This creates a faster experience for your users, and it saves you money because you won't need to pay for that traffic to your origin. Your origin will still need to handle some requests, but not as many. Additionally, a reverse proxy can compress HTTP data before sending the response, which also makes for faster delivery.
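To get a feel for the compression step, here is a minimal sketch using Python's standard `gzip` module. The `compress_response` helper is hypothetical, and its check of the `Accept-Encoding` request header is simplified (it ignores quality values such as `gzip;q=0`).

```python
import gzip
from typing import Dict, Tuple


def compress_response(body: bytes, accept_encoding: str) -> Tuple[bytes, Dict[str, str]]:
    """Gzip the body if the client advertises gzip support; otherwise pass it through."""
    encodings = [token.split(";")[0].strip().lower() for token in accept_encoding.split(",")]
    if "gzip" in encodings:
        headers = {"Content-Encoding": "gzip", "Vary": "Accept-Encoding"}
        return gzip.compress(body), headers
    return body, {}


# Repetitive markup, like most HTML and CSS, compresses well.
page = ("<p>Hello, cache!</p>\n" * 200).encode("utf-8")

payload, extra_headers = compress_response(page, "gzip, deflate, br")
plain_payload, plain_headers = compress_response(page, "identity")

print(len(page), len(payload), extra_headers)
```

The compressed payload is much smaller than the original page, and a client that decompresses it gets back exactly the same bytes.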

Another way a reverse proxy optimizes the response time is by balancing the demand for content. If your website has a surge in popularity and starts getting thousands of concurrent requests, this can overload your origin server and crash your website. By serving content from cache, you can prevent all of that traffic from hitting your origin at once. If you have more than one origin, the reverse proxy can distribute the requests across those servers. In the event your origin does go down, users can still access content that is already in the cache, so they may not experience the downtime at all.
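One simple way to distribute requests across several origins is round-robin: each request that has to go to origin is sent to the next server in the list. The sketch below assumes three made-up origin hostnames and is only meant to show the idea. Real load balancers often also weigh server health and capacity.

```python
from collections import Counter
from itertools import cycle

# Hypothetical origin servers sitting behind the reverse proxy.
origins = ["origin-a.example.com", "origin-b.example.com", "origin-c.example.com"]

# cycle() yields the origins in order, starting over after the last one.
next_origin = cycle(origins)

# Pretend nine cache misses arrive and each must be forwarded to an origin.
assignments = [next(next_origin) for _ in range(9)]
requests_per_origin = Counter(assignments)

print(assignments[:4])
print(dict(requests_per_origin))
```

Each origin ends up handling three of the nine requests instead of one server handling all of them.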

Why Fastly

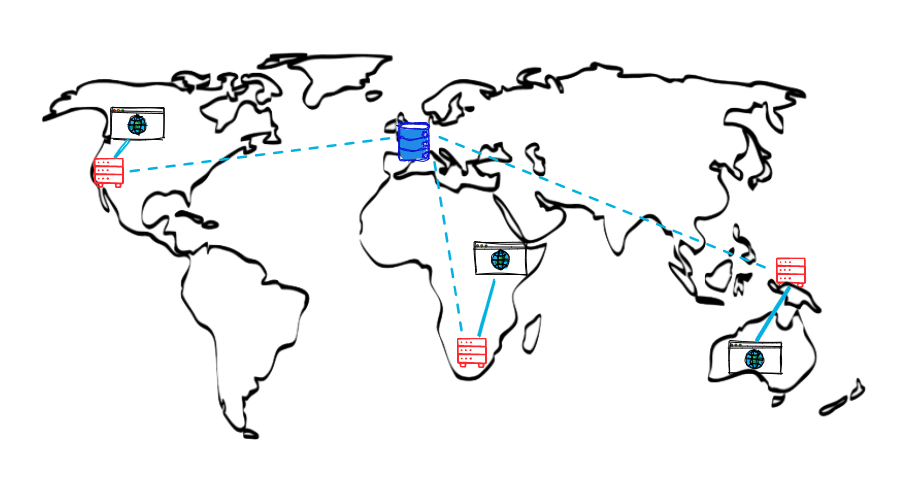

A CDN service like Fastly is a reverse proxy but on a greater scale. The time needed to generate and load content isn't the only factor that impacts how quickly a client receives a response to their request. The physical distance between a client and your origin also adds to the response time. A CDN consists of an entire network of cache servers that sit between clients and your origin servers. Each cache server in the network caches content from your origin and responds to requests from the clients closest to it.

With Fastly’s CDN, you can efficiently cache and deliver your content because the strategic geographic distribution of our points of presence (POPs) puts our caches closer to your users.

Fastly uses the Varnish Cache reverse proxy as an underlying architecture, which is not only fast but highly customizable. In our guide to CDNs, we talk about the difficulties of caching event-driven content because of its unpredictable nature. However, with Fastly you can cache this type of content and programmatically purge when content changes.

No matter how you’re caching content, it’s important to monitor how well it’s working. In general, you want to see more cache hits than cache misses. A cache hit means the requested content was found in the cache. A cache miss means the requested content was not found in cache and had to be retrieved from origin. While you can manually keep track of this information, Fastly has a real-time analytics dashboard where you can easily keep track of stats like cache hits, misses, and more.

One of the more useful stats Fastly automatically calculates is cache hit ratio. Generally speaking, cache hit ratio (CHR) is the ratio of requests delivered by the cache server (hits) to all requests (hits + misses). You want your cache hit ratio as high as possible because a high cache hit ratio means you've kept request traffic from hitting your origin unnecessarily. By monitoring the real-time stats, you can adjust your cache settings so that you have more hits than misses. You can also monitor several other stats to learn where you’re caching traffic, how much of your site is being cached, errors being served by your site, and more.
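The general definition above, hits divided by hits plus misses, is easy to compute yourself. The following sketch uses made-up numbers and a hypothetical `cache_hit_ratio` function. It reflects only the general formula, not necessarily the exact calculation behind Fastly's dashboard.

```python
def cache_hit_ratio(hits: int, misses: int) -> float:
    """Return hits / (hits + misses), or 0.0 when there were no requests."""
    total = hits + misses
    if total == 0:
        return 0.0
    return hits / total


# Made-up example: 900 requests served from cache, 100 sent to origin.
ratio = cache_hit_ratio(hits=900, misses=100)
print(f"Cache hit ratio: {ratio:.1%}")
```

In this example, 90% of requests never reach the origin. If tuning your cache settings turned half of the remaining misses into hits, the ratio would rise to 95%.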

What’s next

Continue reading our essentials guides to learn how CDNs are used to cache at scale and why and how to purge cached content.

Once you're ready to start caching your content, check out our Introduction to Fastly's CDN tutorial for a real-life example of configuring caching that you can use with your own service. We also recommend reviewing our caching configuration best practices. For more information about Fastly’s real-time and historical stats and how you can use them to monitor your services, refer to About the Observability page.

Have you signed up for a Fastly account? There's no obligation and you can test up to $50 of traffic for free.
