Caching configuration best practices

Last updated 2025-01-16

To ensure optimum origin performance during times of increased demand or during scheduled downtime for your servers, consider the following best practices for your service's caching configurations.

Integrate Fastly with your application platform

You can optimize caching with Fastly by customizing your application platform settings. For instructions, see our documentation on integrating third-party services and configuring web server software. We also provide a variety of plugins to help you directly integrate Fastly with your content management system.

Check your cache hit ratio

The number of requests delivered by a cache server, divided by the number of cacheable requests (hits + misses), is called the cache hit ratio. A high cache hit ratio means you've kept request traffic from hitting your origin unnecessarily. Requests come from cache instead. In general, you want your cache hit ratio as high as possible, usually in excess of 90%. You can check your hit ratio by viewing the Observability page for your service.
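The ratio defined above can be sketched in a few lines. This is only an illustration of the arithmetic; the function name and the request counts are hypothetical and are not taken from any Fastly API.

```python
def cache_hit_ratio(hits: int, misses: int) -> float:
    """Return hits / (hits + misses) as a fraction between 0 and 1."""
    cacheable = hits + misses
    if cacheable == 0:
        # No cacheable requests yet, so the ratio is undefined; report 0.0.
        return 0.0
    return hits / cacheable


# Hypothetical counts for illustration only.
ratio = cache_hit_ratio(hits=9_400, misses=600)
print(f"Cache hit ratio: {ratio:.1%}")
```

Requests that are not cacheable (for example, passed requests) are left out of both counts, which matches the definition given above.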

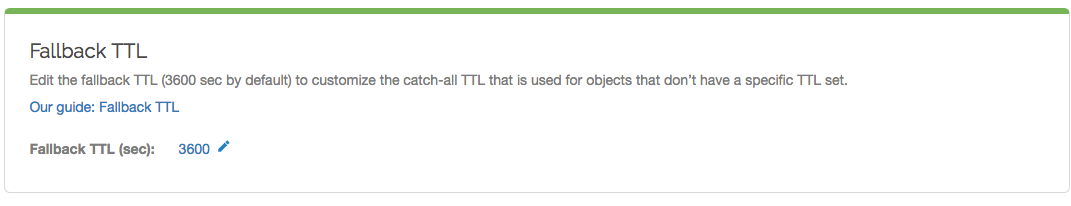

Set a fallback TTL

The amount of time an object can be retained in cache is its time to live, or TTL. The TTL is set based on the cache-related header information returned from your origin server. You can also set a fallback TTL (sometimes called a default TTL), which applies when the response carries no other source of freshness.

WARNING

If you're using custom VCL, the fallback TTL will be specified in the VCL boilerplate and the fallback TTL configured via the Fastly control panel or the API will not be applied. Refer to our documentation on cache freshness and TTLs for more information.

TIP

If there's no other source of freshness in the response, setting the fallback TTL to 0 seconds in the Fastly control panel will set return(pass) in vcl_fetch.

We set a default fallback TTL that you can update at any time as follows:

- Log in to the Fastly control panel.

- From the Home page, select the appropriate service. You can use the search box to search by ID, name, or domain.

- Click Edit configuration and then select the option to clone the active version.

- Click Settings.

- In the Fallback TTL area, click the pencil next to the TTL setting.

- In the Fallback TTL (sec) field, enter the new TTL in seconds.

- Click Save to save your changes.

- Click Activate to deploy your configuration changes.

NOTE

See our Google Cloud Storage instructions if you're changing the default TTL for a GCS bucket.

Understand how cache control headers work

You can use cache control headers to set policies that determine how long your data is cached.

Fastly looks for caching information in each of these headers as described in our documentation on cache freshness. In order of preference:

- Surrogate-Control:

- Cache-Control: s-maxage

- Cache-Control: max-age

- Expires:
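The order of preference can be modeled as a simple fall-through lookup. The sketch below is a simplified model for illustration, not Fastly's implementation: the function names are hypothetical, header names are matched case-sensitively, and the handling of malformed values is an assumption.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Mapping, Optional


def _directive_seconds(header_value: str, name: str) -> Optional[int]:
    """Return the integer value of `name=<seconds>` in a comma-separated header."""
    for part in header_value.split(","):
        key, _, value = part.strip().partition("=")
        if key.lower() == name and value.strip().isdigit():
            return int(value.strip())
    return None


def pick_ttl(headers: Mapping[str, str], now: datetime) -> Optional[int]:
    """Pick a TTL in seconds using the order of preference listed above."""
    surrogate = headers.get("Surrogate-Control", "")
    cache_control = headers.get("Cache-Control", "")
    candidates = (
        _directive_seconds(surrogate, "max-age"),
        _directive_seconds(cache_control, "s-maxage"),
        _directive_seconds(cache_control, "max-age"),
    )
    for ttl in candidates:
        if ttl is not None:
            return ttl
    expires = headers.get("Expires")
    if expires:
        try:
            delta = parsedate_to_datetime(expires) - now
        except (TypeError, ValueError):
            # Unparseable or timezone-less date: treat as no information.
            return None
        return max(0, int(delta.total_seconds()))
    return None  # No freshness information; a fallback TTL would apply.


# Hypothetical response headers for illustration only.
now = datetime(2025, 1, 16, 12, 0, 0, tzinfo=timezone.utc)
print(pick_ttl({"Surrogate-Control": "max-age=3600", "Cache-Control": "max-age=60"}, now))
```

In this example the Surrogate-Control value wins over the Cache-Control value because it comes first in the order of preference.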

Surrogate headers

Surrogate headers are a relatively new addition to the cache management vocabulary (described in this W3C tech note). These headers provide a specific cache policy for proxy caches in the processing path. Surrogate-Control accepts many of the same values as Cache-Control, plus some other more esoteric ones (read the tech note for all the options).

One use of this technique is to provide conservative cache interactions to the browser (for example, Cache-Control: no-cache). This causes the browser to re-validate with the source on every request for the content. This makes sure that the user is getting the freshest possible content. Simultaneously, a Surrogate-Control header can be sent with a longer max-age that lets a proxy cache in front of the source handle most of the browser traffic, only passing requests to the source when the proxy's cache expires.

With Fastly, one of the most useful Surrogate headers is Surrogate-Key. When Fastly processes a request and sees a Surrogate-Key header, it uses the space-separated value as a list of tags to associate with the request URL in the cache. Combined with Fastly's Purge API, an entire collection of URLs can be expired from the cache in one API call (which typically takes around 1ms). Of the headers Fastly checks for caching information, Surrogate-Control is the most specific.
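To make the tagging idea concrete, here is a toy in-memory model of key-based purging. It is a sketch under stated assumptions: the class and method names are hypothetical, and it says nothing about how Fastly's cache or Purge API is actually implemented.

```python
from collections import defaultdict


class TaggedCache:
    """Toy in-memory cache that indexes URLs by surrogate key (illustrative only)."""

    def __init__(self) -> None:
        self._objects = {}                   # url -> cached body
        self._urls_by_key = defaultdict(set)  # surrogate key -> set of urls

    def store(self, url: str, body: bytes, surrogate_key: str = "") -> None:
        """Cache `body` under `url` and tag it with each space-separated key."""
        self._objects[url] = body
        for key in surrogate_key.split():
            self._urls_by_key[key].add(url)

    def purge_key(self, key: str) -> int:
        """Drop every cached URL tagged with `key`; return how many were removed."""
        urls = self._urls_by_key.pop(key, set())
        removed = 0
        for url in urls:
            if self._objects.pop(url, None) is not None:
                removed += 1
        return removed

    def __contains__(self, url: str) -> bool:
        return url in self._objects


# Hypothetical URLs and keys for illustration only.
cache = TaggedCache()
cache.store("/products/1", b"...", surrogate_key="product-1 catalog")
cache.store("/products/1/reviews", b"...", surrogate_key="product-1")
cache.store("/about", b"...", surrogate_key="static")
print(cache.purge_key("product-1"))
```

A single purge by key removes both product pages while leaving the unrelated page cached, which is the property that makes key-based purging useful for grouped content.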

Cache-Control directives

Cache-Control response directives, as defined by section 5.2.2 of RFC 9111, include:

- Cache-Control: public - Any cache can store a copy of the content. You don't need to add the public directive to a response as most are cacheable by default.

- Cache-Control: private - Don't store. This is intended for a single user.

- Cache-Control: no-cache - Re-validate before serving this content. This is ignored by Fastly.

- Cache-Control: no-store - Don't ever cache this content in a web browser. This is ignored by Fastly.

- Cache-Control: max-age=[seconds] - Caches can store this content for n seconds.

- Cache-Control: s-maxage=[seconds] - Same as max-age but applies specifically to proxy caches.

Only the max-age, s-maxage, and private Cache-Control directives will influence Fastly's caching. All other Cache-Control directives will not, but will be passed through to the browser. For more in-depth information about how Fastly responds to these cache control headers and how these headers interact with Expires and Surrogate-Control, check out our documentation on HTTP caching semantics.
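As a quick way to audit a header value against the rule above, the following sketch separates the directives that influence Fastly's caching from those that do not. The function and constant names are hypothetical, and the parsing is deliberately minimal (it does not handle quoted values).

```python
from typing import List, Tuple

# Directive names that, per the text above, influence Fastly's caching.
INFLUENTIAL_DIRECTIVES = {"max-age", "s-maxage", "private"}


def split_directives(cache_control: str) -> Tuple[List[str], List[str]]:
    """Split a Cache-Control value into (influential, other) directives."""
    influential: List[str] = []
    other: List[str] = []
    for raw in cache_control.split(","):
        directive = raw.strip()
        if not directive:
            continue
        name = directive.partition("=")[0].strip().lower()
        if name in INFLUENTIAL_DIRECTIVES:
            influential.append(directive)
        else:
            other.append(directive)
    return influential, other


# Hypothetical header value for illustration only.
print(split_directives("public, max-age=300, no-cache, s-maxage=3600"))
```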

Expires header

The Expires header tells the cache (typically a browser cache) how long to hang onto a piece of content. Thereafter, the browser will re-request the content from its source. The downside is that it's a static date and if you don't update it later, the date will pass and the browser will start requesting that resource from the source every time it sees it.

Fastly will respect the Expires header value only if the Surrogate-Control and Cache-Control headers are not found in the response.

Increase Cache-Control header times

During times of increased demand, you can instruct Fastly to keep objects in cache as long as possible by increasing the times you set on your Cache-Control headers. Consider changing the max-age on your Cache-Control or Surrogate-Control headers. Our documentation on HTTP caching semantics describes this in more detail.

Configure Fastly to temporarily serve stale content

If your origin becomes unavailable for an extended period of time (for example, being taken offline for maintenance purposes), temporarily serving stale content may help you. Serving stale content can also benefit you if your site's static content is updated or published quite frequently.

You can instruct Fastly to serve stale content by adding a stale-while-revalidate or stale-if-error directive to your Cache-Control or Surrogate-Control headers. Our guide to serving stale content describes this in more detail.
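For origins that build headers in application code, a header value carrying both stale directives might be assembled as in the sketch below. The helper name and the durations are hypothetical; choose values that fit your own content and maintenance windows.

```python
def cache_control_with_stale(
    max_age: int, stale_while_revalidate: int, stale_if_error: int
) -> str:
    """Build a Cache-Control value with both stale directives (all in seconds)."""
    for value in (max_age, stale_while_revalidate, stale_if_error):
        if value < 0:
            raise ValueError("durations must be non-negative seconds")
    return (
        f"max-age={max_age}, "
        f"stale-while-revalidate={stale_while_revalidate}, "
        f"stale-if-error={stale_if_error}"
    )


# Hypothetical durations: fresh for 5 minutes, revalidate in the background
# for up to 1 minute, and serve stale for up to a day if the origin errors.
print(cache_control_with_stale(300, 60, 86400))
```

The same directive syntax applies if you prefer to send the value in a Surrogate-Control header instead.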

Decrease your first byte timeout time

After you have configured Fastly to temporarily serve stale content, decreasing your first byte timeout will cause stale content to be served to the requestor faster while fresh content is fetched from the origin. Combined with serving stale content, a shorter first byte timeout also reduces unnecessary 503 first byte timeout errors. Decrease the first byte timeout to your origin as follows:

- Log in to the Fastly control panel.

- From the Home page, select the appropriate service. You can use the search box to search by ID, name, or domain.

- Click Edit configuration and then select the option to clone the active version.

- Click Origins.

- In the Hosts area, find your origin server and click the pencil to edit the host.

- Click Advanced options at the bottom of the page.

- In the First byte timeout field, enter the new first byte timeout in milliseconds. Approximately 15000 milliseconds is a good default to start with.

- Click Update to save your changes.

- Click Activate to deploy your configuration changes.

Configure caching actions for specific workflows

When you create a cache setting, the Action setting determines how the request will be handled. The action settings and the most common workflow for each are described below.

Do nothing now - Selecting this option will apply the request setting options, but won't force a lookup or a pass action.

Pass (do not cache) - Selecting this option will make the request and subsequent response bypass the cache and go straight to origin. Use this option if you need to conditionally prevent pages from caching or when using conditions.

Restart processing (not common) - Selecting this option will restart processing of the request. Use this option if you need to check multiple backends for a single request.

Deliver (not common) - Selecting this option will deliver the object to the client. Use this option if you need to create an override condition.

Consider custom error handling

When downtime can't be avoided, standard error messages might not ensure the best user experience. Consider creating custom error messages that include information specific to the request being made and pertinent to the user. Our guide to creating error pages with custom responses provides more detail.

HTTP status codes cached by default

Fastly caches the following response status codes by default. In addition to these statuses, you can force an object to cache under other states using conditions and responses.

| Code | Message |
|---|---|
| 200 | OK |
| 203 | Non-Authoritative Information |
| 300 | Multiple Choices |
| 301 | Moved Permanently |
| 302 | Moved Temporarily |
| 404 | Not Found |
| 410 | Gone |

TIP

You can override caching defaults based on a backend response. For example, if you don't want 404 error responses to be cached for the full caching period of a day, you could add a cache object and then create conditions for it.

To cache status codes other than the ones listed above, set beresp.cacheable = true; in vcl_fetch. This tells Varnish to obey backend HTTP caching headers and any other custom TTL logic. A common pattern is to allow all 2XX responses to be cacheable:

```vcl
sub vcl_fetch {
  # ...
  if (beresp.status >= 200 && beresp.status < 300) {
    set beresp.cacheable = true;
  }
  # ...
}
```

Inform Fastly Customer Support

We like to be sure we're readily available for assistance during customer events. When you know in advance that an event is forthcoming, contact support with details. Be sure to include details about:

- the date and time of the event

- the type of event happening

- how long you expect it to last (if it's planned)

- the Fastly services that might be affected

If the event you're planning is designed to validate the security of your service behind Fastly, be sure to read our guide to security testing first.
